The authentication battleground where truth and deception collide

It is the year 2026. A voice that sounds exactly like your CEO's authorizes a $500,000 wire transfer. An image of a leader in your industry endorsing a competitor goes viral. A video of a politician declaring war looks believable enough to move markets.

But one thing can stop these attacks in their tracks: cryptographic signatures embedded in genuine media, which reveal what is authentic and what is not.

What was once a niche concern in cybersecurity is now a boardroom necessity. Deepfakes are no longer costly Hollywood special effects; they are industrial-strength deception, complete with automation, scalability, and distribution that resists attribution. Meanwhile, the technology to counter them has moved from the research lab into production. Python, the language of choice for today’s AI, is now as important for authenticating digital content as it is for creating it.

This isn’t about catching deepfakes. It’s about weaving the truth into the very fabric of digital media.

The Deepfake Explosion: Numbers That Demand Attention

The numbers read like science fiction, but they are real and happening now. In only three years, deepfake attacks have escalated from curious anomalies to a statistical flood. The average fraud loss per deepfake attack is $500,000, rising to as much as $680,000 for enterprise victims. Major financial institutions now treat deepfakes as a standard threat vector that demands a dedicated response.

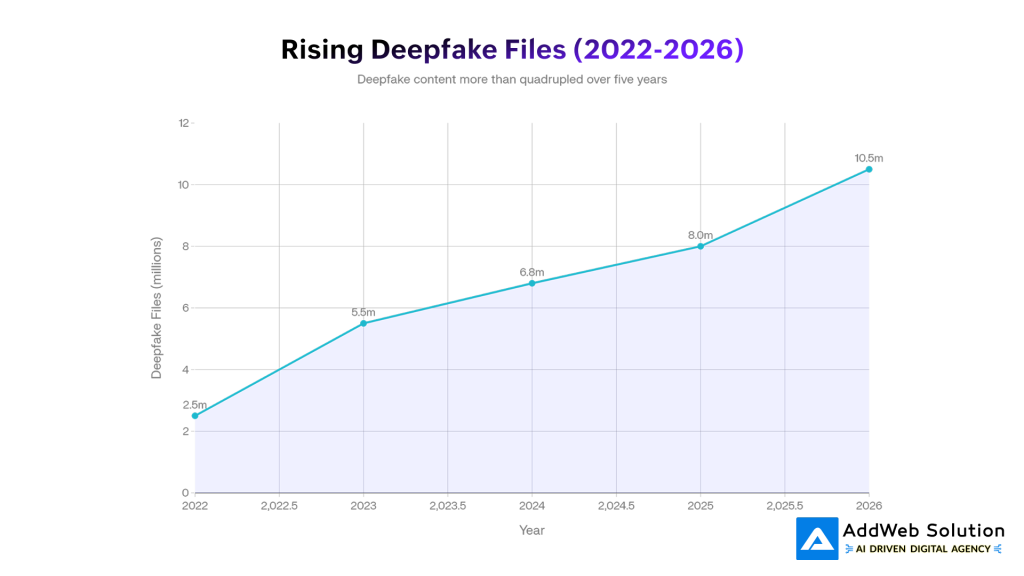

The growth curve tells the story. The number of deepfake files rose from 500,000 in 2023 to 8 million in 2025, a 1,500% increase. More disturbing is the rate of change. Deepfake-enabled spear-phishing attacks have increased 1,000% over the past decade, and the pace is still accelerating. In the first quarter of 2025 alone there were 179 reported incidents, 19% more than in all of 2024. According to incident tracking by specialist security firms, the number of incidents doubles every 90 days.

The threat landscape is also changing in kind. Voice cloning has become the most common attack type, and audio deepfakes are especially hard for humans to tell apart from genuine recordings. Even with video, human accuracy at spotting high-quality deepfakes is only around 24.5%, which means roughly three out of four viewers accept the fake as real. Meanwhile, deepfake-based biometric fraud now accounts for 40% of all identity verification attacks.

The exponential growth in deepfake incidents and files from 2022 to 2026 reflects a rapidly accelerating threat landscape. Over that period, the number of files is estimated to rise from 2.5 million to 10.5 million, while reported incidents per month increase sixfold, marking a transition from sporadic events to organized fraud campaigns.

What makes 2026 different from preceding years is not merely the number of incidents; it is the industrialization of the threat. Fraud-as-a-service platforms have turned deepfake production and deployment into a commodity. Synthetic-identity attacks combine deepfakes with stolen biometric data, making account takeovers feasible at scale. Organized multi-profile browser attacks simulate legitimate users, while botnets automate the entire fraud process from reconnaissance to exploitation. And the democratization of generative AI and open-source toolkits has dramatically lowered the skill needed to carry out these attacks.

Why Traditional Verification Failed

For decades, authentication rested on trust in institutions. Readers took Reuters at its word because of Reuters’ reputation. Banks trusted voice biometrics because they knew their clients. Social media platforms verified accounts to signal that they were authentic.

However, in 2026, this paradigm is no longer valid.

The issue isn’t that these organizations weren’t doing their best. The issue is that trust through authority is a slow, expensive process that does not scale. No journalist can verify every piece of information circulating online. No bank can check every caller’s voice against a known voiceprint in real time. No platform can manually review every interaction at scale.

Deepfakes exposed the gap between the speed of verification and the speed of information. Before a fact-checker can establish that a video is fake, 2 million people have already watched it. Before a legal team can prove that a document is forged, the wire transfer has already cleared. Deepfakes made the economics of trust-through-authority unsustainable.

More fundamentally, traditional verification assumes there is a fixed, existing object to inspect and compare against. Deepfakes do not counterfeit existing content; they produce new content realistic enough to be indistinguishable from the real thing. The answer, therefore, is not a better way of detecting forgeries. It is a way of proving authenticity at the moment of creation.

How Cryptographic Signatures Actually Work

This is where the math becomes the solution.

A cryptographic signature is not a certificate or a watermark. It is a mathematical proof that the holder of a specific private key signed a specific piece of content, and that the content has not changed since. Combined with a trusted timestamp, it can also establish when the signing took place. Forging a signature without the creator’s private key is computationally infeasible, yet anyone can verify it with nothing more than the creator’s public key and the content itself.

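The process is elegant. The creator’s system runs the content (text, image, audio, or video) through a cryptographic hash function, producing a short fingerprint that is, for practical purposes, unique to that exact content. The hash is then signed with the creator’s private key, and the result is the signature. For textbook RSA this step is often described as “encrypting the hash with the private key,” but modern schemes such as RSA-PSS, ECDSA, and Ed25519 use a dedicated signing operation rather than encryption.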

To verify the content, the recipient recomputes the hash of what they received and uses the creator’s public key to check that hash against the signature. If the check passes, the content is genuine and has not been tampered with. If even a single byte has been altered, the hash changes and verification fails.
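
The hash-then-sign flow above can be sketched with the `cryptography` library. This is a minimal illustration that assumes ECDSA on the P-256 curve with SHA-256. The digest is computed explicitly with `hashlib` and handed to the signer as "prehashed", so the two steps stay visible. The helper names and placeholder bytes are hypothetical.

```python
import hashlib

from cryptography.exceptions import InvalidSignature
from cryptography.hazmat.primitives import hashes
from cryptography.hazmat.primitives.asymmetric import ec, utils

# ECDSA over a digest we compute ourselves, so hashing and signing stay separate steps.
PREHASHED_ECDSA = ec.ECDSA(utils.Prehashed(hashes.SHA256()))


def fingerprint(content: bytes) -> bytes:
    """SHA-256 digest: a 32-byte fingerprint of the exact content."""
    return hashlib.sha256(content).digest()


def sign_content(private_key: ec.EllipticCurvePrivateKey, content: bytes) -> bytes:
    # Step 1: hash the content. Step 2: sign the hash with the private key.
    return private_key.sign(fingerprint(content), PREHASHED_ECDSA)


def verify_content(public_key: ec.EllipticCurvePublicKey, content: bytes, signature: bytes) -> bool:
    # Recompute the hash of what was received and check it against the signature.
    try:
        public_key.verify(signature, fingerprint(content), PREHASHED_ECDSA)
        return True
    except InvalidSignature:
        return False


creator_key = ec.generate_private_key(ec.SECP256R1())
original = b"placeholder image bytes"
signature = sign_content(creator_key, original)

tampered = bytearray(original)
tampered[0] ^= 0x01  # flip one bit of the first byte

print(verify_content(creator_key.public_key(), original, signature))        # True
print(verify_content(creator_key.public_key(), bytes(tampered), signature))  # False
```

Flipping a single bit is enough to change the digest, so the second check fails.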

The security rests on two properties. First, it is computationally infeasible to find two different inputs that produce the same hash (collision resistance). Second, it is computationally infeasible to produce a valid signature without the private key (unforgeability), which is what gives signatures their non-repudiation. These properties are not proven in an absolute mathematical sense. They rest on well-studied hardness assumptions that have withstood decades of public cryptanalysis.

Python libraries such as cryptography and pycryptodome provide well-vetted implementations of industry-standard algorithms. The process involves three steps:

- key generation: creating a public/private key pair;
- signing: using the private key to produce a signature;
- verification: using the public key to check content against that signature.

None of these steps requires human interaction or institutional mediation.
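
A minimal sketch of those three steps follows. It assumes Ed25519 keys from the `cryptography` library; the caption text and the `verify_with_pem` helper are illustrative. The verifying side only ever touches the PEM-encoded public key.

```python
from cryptography.exceptions import InvalidSignature
from cryptography.hazmat.primitives import serialization
from cryptography.hazmat.primitives.asymmetric.ed25519 import (
    Ed25519PrivateKey,
    Ed25519PublicKey,
)

# 1. Key generation: the private key stays with the creator.
private_key = Ed25519PrivateKey.generate()

# The public key is exported as PEM so it can be published or distributed.
public_pem = private_key.public_key().public_bytes(
    encoding=serialization.Encoding.PEM,
    format=serialization.PublicFormat.SubjectPublicKeyInfo,
)

# 2. Signing: Ed25519 hashes the message internally, so raw bytes are passed in.
content = b"Caption: harbour at dawn, 2026-03-14"
signature = private_key.sign(content)


# 3. Verification: another party needs only the PEM public key, the content and the signature.
def verify_with_pem(pem: bytes, data: bytes, sig: bytes) -> bool:
    public_key = serialization.load_pem_public_key(pem)
    if not isinstance(public_key, Ed25519PublicKey):
        raise TypeError("expected an Ed25519 public key")
    try:
        public_key.verify(sig, data)
        return True
    except InvalidSignature:
        return False


print(verify_with_pem(public_pem, content, signature))          # True
print(verify_with_pem(public_pem, content + b"!", signature))   # False
```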

Consider a simple verification workflow. A photojournalist takes a picture with a camera that has device-specific signing capability. The image and its metadata (date, location, modifications) are signed cryptographically before the image leaves the device. When the signed image reaches the news organization, verification software confirms that it has not been tampered with. The image is published with the signature information embedded. Weeks later, anyone can check its authenticity using freely available verification software that needs only the public key.
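
To make the camera workflow concrete, here is a hedged sketch of how capture metadata might be bound to an image. The metadata and the image's SHA-256 hash are serialized as canonical JSON and signed with a device key, so changing either the pixels or the metadata breaks verification. The field names (`captured_at`, `location`, `edits`), the record layout and the helper functions are hypothetical. Real cameras embed C2PA manifests rather than this ad hoc format.

```python
import hashlib
import json

from cryptography.exceptions import InvalidSignature
from cryptography.hazmat.primitives.asymmetric.ed25519 import (
    Ed25519PrivateKey,
    Ed25519PublicKey,
)


def canonical(payload: dict) -> bytes:
    # Deterministic JSON so signer and verifier serialize identical bytes.
    return json.dumps(payload, sort_keys=True, separators=(",", ":")).encode("utf-8")


def sign_capture(device_key: Ed25519PrivateKey, image: bytes, metadata: dict) -> dict:
    # Bind the metadata to this exact image by including the image's SHA-256 hash.
    payload = {"image_sha256": hashlib.sha256(image).hexdigest(), **metadata}
    return {"payload": payload, "signature": device_key.sign(canonical(payload)).hex()}


def verify_capture(device_pub: Ed25519PublicKey, image: bytes, record: dict) -> bool:
    payload = record["payload"]
    if payload.get("image_sha256") != hashlib.sha256(image).hexdigest():
        return False  # the image bytes changed after capture
    try:
        device_pub.verify(bytes.fromhex(record["signature"]), canonical(payload))
        return True
    except InvalidSignature:
        return False  # the metadata changed after capture


device_key = Ed25519PrivateKey.generate()  # in a real camera this would live in secure hardware
image = b"placeholder JPEG bytes"
record = sign_capture(
    device_key,
    image,
    {"captured_at": "2026-03-14T06:12:00Z", "location": "51.5072,-0.1276", "edits": []},
)

print(verify_capture(device_key.public_key(), image, record))  # True
record["payload"]["location"] = "48.8566,2.3522"              # relocate the photo after the fact
print(verify_capture(device_key.public_key(), image, record))  # False
```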

No central authority is required to verify the image. No human is required to re-verify each republication. The math works to prove authenticity on demand, in any situation, at any time.

Python’s Role in the Verification Infrastructure

Python has become the foundation of content verification tools for several reasons: it is accessible enough for rapid development, mature enough for security-critical applications, and flexible enough to plug into existing media production pipelines.

The standard library provides the hmac and hashlib modules for computing message authentication codes and cryptographic hashes, respectively. For asymmetric cryptography, the cryptography library, maintained by the Python Cryptographic Authority (PyCA), offers production-grade implementations of RSA, ECDSA (Elliptic Curve Digital Signature Algorithm), and more modern algorithms such as Ed25519.
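
The two standard-library modules mentioned above can be shown side by side using only `hashlib`, `hmac` and `secrets`. The key, message and `check` helper are illustrative. `hashlib` yields a fingerprint that anyone can recompute. `hmac` yields a keyed tag that proves the sender knew a shared secret.

```python
import hashlib
import hmac
import secrets

# A shared secret: whoever holds it can both create and check tags.
shared_key = secrets.token_bytes(32)
message = b"asset-42 approved for publication"

# hashlib: a plain SHA-256 fingerprint (anyone can recompute it, so it shows integrity only).
digest = hashlib.sha256(message).hexdigest()

# hmac: a keyed tag that also shows the sender knew the shared key.
tag = hmac.new(shared_key, message, hashlib.sha256).digest()


def check(key: bytes, msg: bytes, received_tag: bytes) -> bool:
    expected = hmac.new(key, msg, hashlib.sha256).digest()
    # compare_digest compares in constant time to avoid leaking timing information.
    return hmac.compare_digest(expected, received_tag)


print(len(digest))                                     # 64 hex characters
print(check(shared_key, message, tag))                 # True
print(check(shared_key, message + b" (edited)", tag))  # False
```

HMAC is symmetric: anyone able to verify a tag can also create one. That is why it suits internal pipelines but cannot give the public, third-party verifiability that the asymmetric signatures discussed next provide.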

RSA is the most widely used algorithm in enterprise applications because it has been battle-tested for decades in HTTPS, digital certificates, and financial systems. A 2048-bit RSA key is considered infeasible to break with today’s computing power. However, a 256-bit ECDSA key offers roughly the same security as a 3072-bit RSA key with far smaller keys and signatures. That matters when authentication data must be embedded in media files without bloating them.
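
The size argument is easy to check empirically. The sketch below assumes the `cryptography` library. It signs the same message with RSA-2048, RSA-3072 (both with PSS padding), ECDSA P-256 and Ed25519, then prints each signature's length:

- RSA signatures are always as long as the modulus, so 256 and 384 bytes here.
- Ed25519 signatures are always 64 bytes.
- DER-encoded P-256 signatures come out at roughly 70 to 72 bytes.

```python
from cryptography.hazmat.primitives import hashes
from cryptography.hazmat.primitives.asymmetric import ec, padding, rsa
from cryptography.hazmat.primitives.asymmetric.ed25519 import Ed25519PrivateKey

message = b"manifest bytes to be embedded in a media file"


def rsa_pss_signature(bits: int) -> bytes:
    key = rsa.generate_private_key(public_exponent=65537, key_size=bits)
    return key.sign(
        message,
        padding.PSS(mgf=padding.MGF1(hashes.SHA256()), salt_length=padding.PSS.MAX_LENGTH),
        hashes.SHA256(),
    )


sizes = {
    "RSA-2048 (PSS)": len(rsa_pss_signature(2048)),
    "RSA-3072 (PSS)": len(rsa_pss_signature(3072)),
    # ECDSA signatures are DER-encoded, so their length varies by a few bytes.
    "ECDSA P-256": len(
        ec.generate_private_key(ec.SECP256R1()).sign(message, ec.ECDSA(hashes.SHA256()))
    ),
    "Ed25519": len(Ed25519PrivateKey.generate().sign(message)),
}

for name, size in sizes.items():
    print(f"{name:>15}: {size} bytes")
```

At a comparable security level (RSA-3072 versus P-256 or Ed25519), the elliptic-curve signatures are roughly five to six times smaller.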

Python libraries such as cryptography and pycryptodome hide the mathematical details. An application developer can generate RSA key pairs, sign data, and verify signatures in a few lines of code. The same library covers different algorithms, key types, and verification processes. That makes it feasible to build enterprise-grade content authentication without every application developer needing cryptographic expertise.
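
To illustrate one library covering several algorithms, here is a hypothetical `sign`/`verify` pair that dispatches on key type using the `cryptography` library. The choices of RSA-PSS with SHA-256 and ECDSA with SHA-256 are assumptions for the sketch, not requirements.

```python
from cryptography.exceptions import InvalidSignature
from cryptography.hazmat.primitives import hashes
from cryptography.hazmat.primitives.asymmetric import ec, ed25519, padding, rsa

PSS = padding.PSS(mgf=padding.MGF1(hashes.SHA256()), salt_length=padding.PSS.MAX_LENGTH)


def sign(private_key, data: bytes) -> bytes:
    """Sign with whichever algorithm the key belongs to."""
    if isinstance(private_key, rsa.RSAPrivateKey):
        return private_key.sign(data, PSS, hashes.SHA256())
    if isinstance(private_key, ec.EllipticCurvePrivateKey):
        return private_key.sign(data, ec.ECDSA(hashes.SHA256()))
    if isinstance(private_key, ed25519.Ed25519PrivateKey):
        return private_key.sign(data)  # Ed25519 takes no separate hash parameter
    raise TypeError(f"unsupported key type: {type(private_key).__name__}")


def verify(public_key, signature: bytes, data: bytes) -> bool:
    """Return True if the signature is valid for data under public_key."""
    try:
        if isinstance(public_key, rsa.RSAPublicKey):
            public_key.verify(signature, data, PSS, hashes.SHA256())
        elif isinstance(public_key, ec.EllipticCurvePublicKey):
            public_key.verify(signature, data, ec.ECDSA(hashes.SHA256()))
        elif isinstance(public_key, ed25519.Ed25519PublicKey):
            public_key.verify(signature, data)
        else:
            raise TypeError(f"unsupported key type: {type(public_key).__name__}")
    except InvalidSignature:
        return False
    return True


keys = [
    rsa.generate_private_key(public_exponent=65537, key_size=3072),
    ec.generate_private_key(ec.SECP256R1()),
    ed25519.Ed25519PrivateKey.generate(),
]
for key in keys:
    sig = sign(key, b"press release v3")
    print(type(key).__name__, verify(key.public_key(), sig, b"press release v3"))
```

Callers never branch on the algorithm themselves. Migrating from RSA to Ed25519 then becomes a key-management change rather than an application rewrite.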

However, the true strength comes into play when Python is used as an integration platform. A production-level verification system in 2026 does not merely sign data; it performs complex operations: media ingestion from cameras, drones, and rendering engines; calculation of cryptographic signatures; embedding of signatures into file metadata; storage of signatures on distributed ledgers; and providing verification APIs that news media platforms, social media sites, and enterprise systems can access. Python manages all these operations, often acting as the control flow between specialized media processing software, cryptographic libraries, and blockchain infrastructure.

Industry Standards: The C2PA Framework

While cryptographic signatures provide the mathematical foundation, widespread enterprise adoption began only once an industry standard for interoperability emerged.

Enter the Coalition for Content Provenance and Authenticity (C2PA). Founded by Adobe, Arm, the BBC, Intel, Microsoft, and Truepic, C2PA specifies how digital content can carry embedded credentials that attest to its provenance and edit history. Rather than requiring each organization to build its own verification system, C2PA defines a standard metadata format that any compliant tool can read and verify.

The C2PA standard packages cryptographic signatures, file metadata, and edit history into a manifest that travels with the content itself. When an image is created, the manifest records who created it, when, with what tools, and whether AI was used. If the image is later edited, a new manifest is added, building up a complete provenance history.

The critical innovation is tamper evidence. The manifest itself is cryptographically signed, so any attempt to alter it invalidates the signature. This is what makes C2PA a “nutrition label” for digital content: anyone can inspect it to see exactly what happened to the content between creation and publication.
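
The tamper-evidence idea can be shown with a deliberately simplified stand-in. This is not the C2PA data model, which stores manifests in JUMBF containers with CBOR assertions and COSE signatures, and in which each actor signs their own manifest. Here a single hypothetical Ed25519 key signs a JSON manifest that records the content hash and an action history. The helper names and fields are illustrative only.

```python
import hashlib
import json

from cryptography.exceptions import InvalidSignature
from cryptography.hazmat.primitives.asymmetric.ed25519 import Ed25519PrivateKey


def canonical(obj: dict) -> bytes:
    return json.dumps(obj, sort_keys=True, separators=(",", ":")).encode("utf-8")


def new_manifest(content: bytes, creator: str, tool: str, ai_used: bool) -> dict:
    return {
        "content_sha256": hashlib.sha256(content).hexdigest(),
        "actions": [{"action": "created", "by": creator, "tool": tool, "ai_used": ai_used}],
    }


def record_edit(manifest: dict, edited: bytes, by: str, tool: str, ai_used: bool) -> dict:
    # An edit appends to the history and re-binds the manifest to the new content.
    return {
        "content_sha256": hashlib.sha256(edited).hexdigest(),
        "actions": manifest["actions"]
        + [{"action": "edited", "by": by, "tool": tool, "ai_used": ai_used}],
    }


signer = Ed25519PrivateKey.generate()
photo = b"placeholder original pixels"
retouched = b"placeholder retouched pixels"

manifest = record_edit(
    new_manifest(photo, "A. Reporter", "camera-firmware", False),
    retouched, "Photo desk", "photo-editor", True,
)
signature = signer.sign(canonical(manifest))  # the whole manifest, history included, is signed

# Quietly deleting the AI-assisted edit from the history invalidates the signature.
forged = {**manifest, "actions": manifest["actions"][:1]}
try:
    signer.public_key().verify(signature, canonical(forged))
    print("forged manifest accepted")
except InvalidSignature:
    print("forged manifest rejected")
```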

In 2026, C2PA adoption has accelerated rapidly across creative software and publishing platforms. Adobe Creative Cloud now includes C2PA credentials by default. Canon cameras now sign images at the moment of capture. Microsoft Office applications now record AI-enhanced changes to images in C2PA manifests. As a result, photographers, designers, and content creators get verification as part of their normal workflow, without any extra steps.

The critical aspect, though, is standardization. Because C2PA is an open standard that supports several signature algorithms, Python developers can build verification infrastructure once and apply it to every C2PA-compliant source. News organizations no longer need separate integrations with each camera manufacturer or software vendor; they code to the C2PA standard, and compatibility follows.

Real-World Applications: When Theory Meets Practice

The transition from research to production systems happened suddenly in 2025, driven by specific use cases that exposed the cost of unverified content.

Journalism: The Trust Equation

Reuters and Canon collaborated on a proof-of-concept that became a template for the industry. The system signs photographs at the point of capture using device-specific cryptographic keys, then registers the signatures on a blockchain, creating two layers of verification. Photographers no longer need to authenticate each image manually; the camera signs every image automatically.

This matters because photojournalism is uniquely vulnerable to deepfakes. During the 2024 election cycles, AI-generated images of world leaders were often indistinguishable from real photographs. The 2023 “Pope in Balenciaga” image, an AI-generated picture that went viral, demonstrated the scale of the risk. Traditional media outlets faced an authenticity crisis because readers had no reliable way to distinguish genuine reporting from sophisticated synthetic media.

Blockchain-registered cryptographic signatures solve this by creating unforgeable proof of publication timestamp and authorship. When readers encounter a potentially controversial image, they can independently verify its origin. Competitors can no longer falsely claim they broke a story first—blockchain timestamps establish definitive priority. This matters more than it appears: Reuters can now prove publication precedence in competitive journalism markets, and investigative journalists have legal evidence for copyright enforcement.
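
Blockchain registration rests on a simple idea: each record commits to the hash of the one before it, so rewriting history is detectable. The sketch below is a single-process, hash-chained log. It is a deliberately minimal stand-in for a blockchain, with no consensus, no replication and no trusted timestamping. The `HashChainRegistry` class and its fields are hypothetical.

```python
import hashlib
import json
import time


def entry_hash(entry: dict) -> str:
    return hashlib.sha256(json.dumps(entry, sort_keys=True).encode("utf-8")).hexdigest()


class HashChainRegistry:
    """Append-only log in which every entry commits to the hash of the entry before it."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def register(self, signature_hex: str, author: str) -> dict:
        entry = {
            "index": len(self.entries),
            "timestamp": time.time(),
            "author": author,
            "signature": signature_hex,
            "prev_hash": entry_hash(self.entries[-1]) if self.entries else "0" * 64,
        }
        self.entries.append(entry)
        return entry

    def is_intact(self) -> bool:
        # Rewriting any earlier entry (e.g. backdating it) breaks the next entry's prev_hash link.
        return all(
            self.entries[i]["prev_hash"] == entry_hash(self.entries[i - 1])
            for i in range(1, len(self.entries))
        )


registry = HashChainRegistry()
registry.register("sig-001", "Agency A")
registry.register("sig-002", "Agency B")
registry.register("sig-003", "Agency A")
print(registry.is_intact())  # True

registry.entries[0]["timestamp"] -= 3600  # try to claim the first story an hour earlier
print(registry.is_intact())  # False
```

On its own, the chain cannot protect its newest entry: whoever controls the log could rewrite that entry or rebuild the whole chain. The latest head hash therefore has to be published or replicated somewhere others can see, which is the role a blockchain's consensus plays.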

The commercial implications became clear when news organizations realized they could license their verified content. If a deepfake detection system or AI trainer wants to use authentic Reuters images, they must pay for verified access—creating a revenue stream based on content authenticity. By 2026, enterprise agreements between publishers and AI companies have begun flowing through these credential-based licensing APIs, compensating creators for content that would otherwise be used without attribution or payment.

Financial Services: Fraud Prevention at Scale

Banking and fintech organizations face the most acute deepfake threat. In some regions, voice cloning attacks are growing by more than 1,000% year over year. Identity verification systems that rely on biometric data are being bypassed with animated deepfakes that mimic liveness cues.

The defense strategy centers on cryptographic verification of transaction authorization. When a customer initiates a wire transfer, the bank captures cryptographic proof that specific authorization came from a specific device, at a specific time, using specific credentials. If a deepfake voice call later claims the customer authorized a different transaction, the cryptographic record provides definitive proof of what actually happened.
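
A hedged sketch of device-signed transaction authorization follows. It is not any bank's actual protocol. The customer's enrolled device signs the exact payee, amount, time and a random nonce. The bank rejects altered, stale or replayed authorizations. The field names, the `BankVerifier` class, the 120-second window and the account string are all hypothetical.

```python
import json
import secrets
import time

from cryptography.exceptions import InvalidSignature
from cryptography.hazmat.primitives.asymmetric.ed25519 import (
    Ed25519PrivateKey,
    Ed25519PublicKey,
)

MAX_AGE_SECONDS = 120  # hypothetical freshness window


def canonical(obj: dict) -> bytes:
    return json.dumps(obj, sort_keys=True, separators=(",", ":")).encode("utf-8")


def authorize(device_key: Ed25519PrivateKey, device_id: str, to_account: str, amount_cents: int) -> dict:
    request = {
        "device_id": device_id,
        "to_account": to_account,
        "amount_cents": amount_cents,
        "issued_at": int(time.time()),
        "nonce": secrets.token_hex(16),  # makes every authorization unique
    }
    return {"request": request, "signature": device_key.sign(canonical(request)).hex()}


class BankVerifier:
    def __init__(self, enrolled: dict[str, Ed25519PublicKey]) -> None:
        self.enrolled = enrolled  # device_id -> public key registered at enrolment
        self.seen_nonces: set[str] = set()

    def accept(self, auth: dict) -> bool:
        req = auth["request"]
        public_key = self.enrolled.get(req["device_id"])
        if public_key is None:
            return False  # unknown device
        try:
            public_key.verify(bytes.fromhex(auth["signature"]), canonical(req))
        except InvalidSignature:
            return False  # amount, payee or device was altered
        if time.time() - req["issued_at"] > MAX_AGE_SECONDS or req["nonce"] in self.seen_nonces:
            return False  # stale or replayed authorization
        self.seen_nonces.add(req["nonce"])
        return True


phone_key = Ed25519PrivateKey.generate()
bank = BankVerifier({"phone-123": phone_key.public_key()})
auth = authorize(phone_key, "phone-123", "DE00-EXAMPLE", 50_000_000)  # $500,000 in cents

print(bank.accept(auth))  # True
print(bank.accept(auth))  # False: same nonce replayed
```

A cloned voice on a phone call cannot produce this signature. The only record the bank treats as authorization is the one signed by the enrolled device.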

Similarly, document verification for loan applications, account opening, and compliance workflows now relies on embedded signatures rather than manual review. A mortgage application submitted with C2PA-verified identity documents carries cryptographic proof that documents haven’t been altered since capture. The fraud detection system can then focus on detecting synthetic documents themselves, rather than wasting resources on detecting tampered versions of genuine documents.

By 2026, every major bank and fintech organization has implemented cryptographic signature verification in their document intake processes. SMEs gain access through cloud-based fraud detection platforms that cost thousands annually rather than millions—a democratization that finally gives small businesses access to enterprise-grade authentication technology.

Legal and Compliance: Admissible Evidence

Regulatory frameworks now mandate verifiable content provenance for synthetic media. The EU AI Act entered into force in August 2024, and its transparency obligations for AI-generated content apply from August 2026. It requires that AI-generated or AI-manipulated content be marked in a machine-readable format and that deepfakes be clearly disclosed. Simply writing “AI-generated” in a caption is insufficient; technical methods such as watermarks, metadata, and cryptographic signatures are expected.

This creates a legal incentive to implement cryptographic provenance. Organizations that document their content creation process through C2PA credentials and cryptographic signatures strengthen their legal position: if a document is later challenged as fraudulent, they can produce cryptographic evidence of its authenticity and edit history. Conversely, organizations that rely on manual documentation face greater liability if they cannot prove their content is genuine.

The implication extends to evidence handling in legal proceedings. In some jurisdictions, digitally signed content with timestamped blockchain registration meets evidentiary standards, and the signature provides technical proof of authenticity that can carry more weight than institutional testimony. This is particularly valuable for journalists covering sensitive topics and for organizations operating where institutional trust is weak.

The Enterprise Adoption Inflection

Enterprise adoption of cryptographic content verification followed a predictable pattern: early pilots with leading media organizations and financial institutions, followed by standardization around C2PA, followed by rapid deployment as tooling matured.

Enterprise content verification is shifting dramatically from manual processes toward automated cryptographic and standards-based approaches. By 2026, C2PA adoption is projected to reach 35%, while multi-layer verification systems combining blockchain and AI-based detection are expected to capture a 15% share, signaling industry convergence on hybrid authentication frameworks.

The shift is quantifiable. In 2024, 85% of enterprises still relied primarily on manual verification. By 2026, that share has fallen to 55%. Meanwhile, 35% have adopted C2PA standards, 28% have implemented cryptographic signatures, and 18% have deployed blockchain-based verification alongside cryptographic authentication. These categories overlap, since many organizations combine methods. The multi-layered approach, combining C2PA, cryptographic signatures, and AI-powered fraud detection, now covers 15% of enterprise workflows, mostly at financial institutions and high-security government agencies.

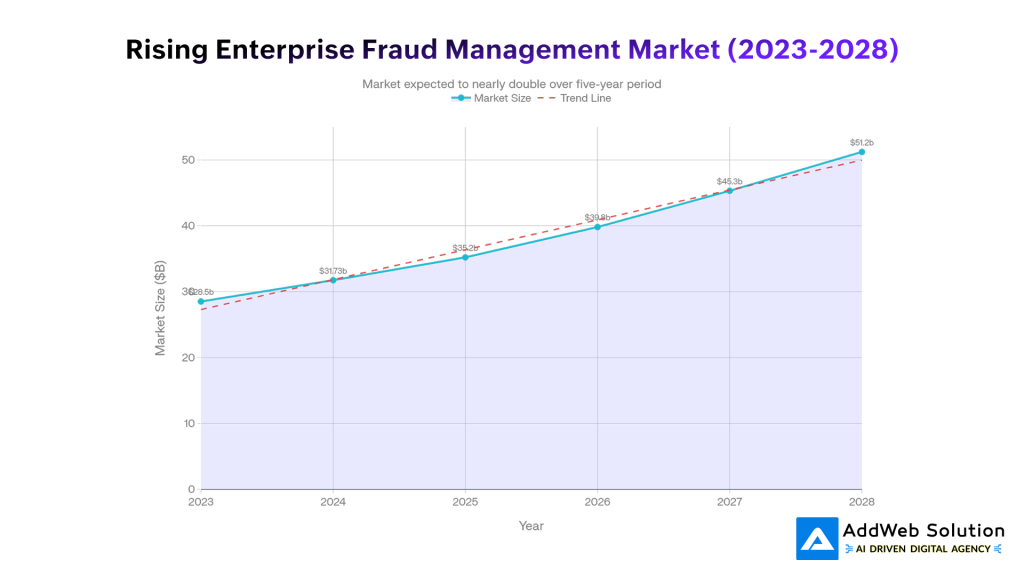

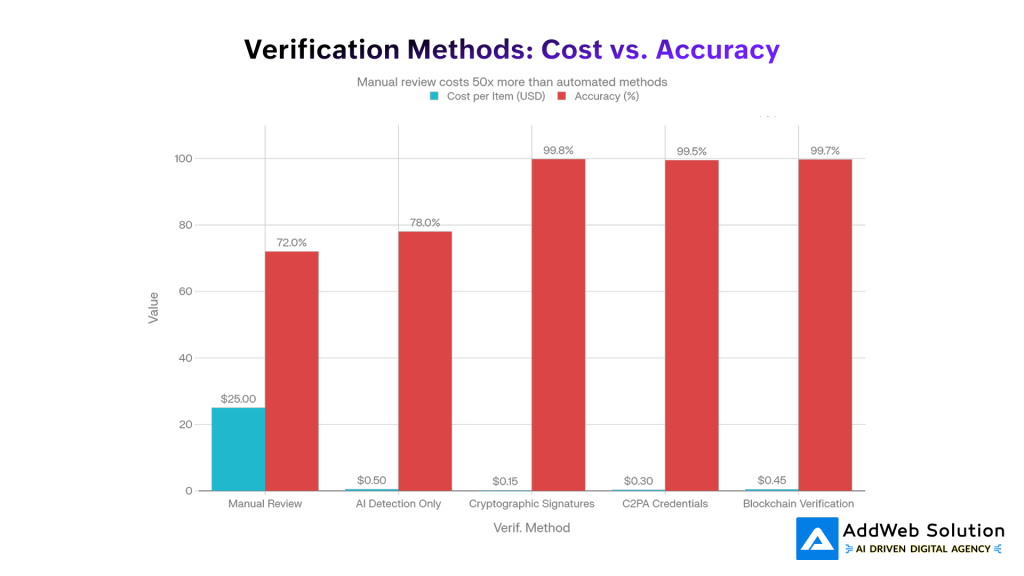

The market data reflects this acceleration. Enterprise fraud detection spending has grown from $28.5 billion in 2023 to a projected $39.8 billion in 2026. More significantly, the per-item cost of content verification has collapsed. Manual review costs $25 per item at 72% accuracy, while cryptographic signature verification costs $0.15 per item at 99.8% accuracy. At scale, the advantage is overwhelming: organizations that skip cryptographic verification pay roughly 167 times more per item for worse results.
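
The per-item figures above translate into the following arithmetic. The one-million-item volume is only an illustrative assumption.

```latex
\begin{aligned}
\text{cost reduction} &= \frac{\$25.00 - \$0.15}{\$25.00} = \frac{24.85}{25.00} = 0.994 \approx 99.4\% \\
\text{cost ratio} &= \frac{\$25.00}{\$0.15} \approx 167 \\
\text{manual review, } 10^6 \text{ items} &= 10^6 \times \$25.00 = \$25{,}000{,}000 \\
\text{cryptographic verification, } 10^6 \text{ items} &= 10^6 \times \$0.15 = \$150{,}000
\end{aligned}
```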

Enterprise fraud management market expansion reflects accelerating investment in AI-powered authentication and verification systems. The market is projected to grow from $28.5 billion in 2023 to $51.2 billion by 2028, representing an 11.5% compound annual growth rate driven primarily by deepfake-related fraud detection and cryptographic content authentication solutions.

The inflection point arrived when tooling moved from “technically possible” to “easier than the alternative.” Adobe integrating C2PA into Creative Cloud, Canon embedding signing into camera firmware, and Python libraries reaching production maturity shifted the conversation from “should we implement cryptographic verification” to “why haven’t we implemented it yet.”

The Regulatory Mandate

Regulatory frameworks have crystallized around mandatory labeling and technical verification of synthetic media, creating immediate compliance requirements.

The EU AI Act establishes that any content “substantially created or manipulated” by AI must be labeled and marked in a machine-readable format. The critical phrase is “machine-readable”: simple text labels are insufficient. The regulation explicitly names cryptographic methods among suitable techniques: “such techniques and methods should be sufficiently reliable, interoperable, effective and robust as far as this is technically feasible, taking into account available techniques or a combination of such techniques, such as watermarks, metadata identifications, cryptographic methods for proving provenance and authenticity of content.”

The enforcement teeth matter. Non-compliance results in fines up to €15 million or 3% of global annual turnover, whichever is higher. For AI model providers and content creators, compliance has shifted from optional to mandatory. This regulatory push has accelerated adoption among organizations that might otherwise have delayed implementation.

Multiple U.S. states have proposed similar requirements. Rules worldwide are converging toward mandatory machine-readable provenance for synthetic media, with cryptographic verification as a leading technique. Organizations that build verification infrastructure now avoid regulatory scrambling later.

Python Cryptographic Libraries: A Practical Comparison

Implementation choices matter. Different Python libraries serve different purposes, each with distinct tradeoffs in security, ease of use, and production readiness.

The cryptography library from the PyCA project represents the gold standard for general-purpose cryptographic operations. It provides clean abstractions over underlying implementations (like OpenSSL), making RSA, ECDSA, and Ed25519 accessible without cryptographic expertise. The library is widely audited, actively maintained, and used in production systems handling billions of transactions. For most content verification workflows, cryptography is the default choice.

pycryptodome offers lower-level control for developers who need specific cryptographic primitives. It includes comprehensive support for legacy algorithms (DES, MD5) alongside modern ones, making it useful for systems that must maintain backward compatibility with older infrastructure. However, the increased flexibility comes with responsibility—developers must make correct choices about algorithm parameters and implementation details.

For modern applications, PyNaCl (Python bindings to libsodium) and the standalone ed25519 package provide Ed25519 signatures, while the cryptography library supports both Ed25519 and Ed448. These Edwards-curve algorithms represent the current state of the art. Compared with RSA at a comparable security level, they offer far smaller keys and signatures and much faster signing. Ed25519 targets roughly the security of 3072-bit RSA. Organizations building greenfield systems increasingly standardize on Ed25519 for new applications.
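
Two practical Ed25519 properties are easy to observe with the `cryptography` library, used here instead of PyNaCl to keep a single dependency; PyNaCl's `nacl.signing.SigningKey` exposes the same primitive:

- its raw public keys are 32 bytes and its signatures 64 bytes;
- it is deterministic, so the same key and message always give the same signature.

ECDSA with the library's default settings uses a fresh random nonce per signature. The message is a placeholder.

```python
from cryptography.hazmat.primitives import hashes, serialization
from cryptography.hazmat.primitives.asymmetric import ec
from cryptography.hazmat.primitives.asymmetric.ed25519 import Ed25519PrivateKey

message = b"frame 0001 of interview.mp4"

ed_key = Ed25519PrivateKey.generate()
raw_public = ed_key.public_key().public_bytes(
    encoding=serialization.Encoding.Raw, format=serialization.PublicFormat.Raw
)

# Ed25519 (RFC 8032) is deterministic: same key + same message -> identical signature,
# so signing does not depend on a random number generator at signing time.
ed_sig_1 = ed_key.sign(message)
ed_sig_2 = ed_key.sign(message)

# ECDSA with the library's default settings uses a random nonce, so repeated signatures differ.
ec_key = ec.generate_private_key(ec.SECP256R1())
ec_sig_1 = ec_key.sign(message, ec.ECDSA(hashes.SHA256()))
ec_sig_2 = ec_key.sign(message, ec.ECDSA(hashes.SHA256()))

print(len(raw_public), len(ed_sig_1))  # 32 64
print(ed_sig_1 == ed_sig_2)            # True
print(ec_sig_1 == ec_sig_2)            # False (with overwhelming probability)
```

Determinism removes a classic failure mode: a weak or repeated random nonce during ECDSA signing can leak the private key. That matters for signing firmware on cameras and other embedded devices.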

The practical consideration: most production content verification systems use the cryptography library for its maturity and accessibility, often pairing it with lower-level libraries when specific performance optimization is needed. Python’s flexibility allows development teams to optimize based on their specific constraints—file size limits for mobile applications, throughput requirements for high-volume verification, or legacy system integration needs.

The Detection vs. Authentication Paradox

A critical insight separates successful 2026 verification strategies from failed attempts: detection and authentication are fundamentally different problems requiring different solutions.

Deepfake detection tries to identify synthetic content by analyzing visual or audio artifacts, and this approach has inherent limits. Detection algorithms are trained on known deepfake techniques and deployment scenarios. As generative models improve, the artifacts detectors rely on vanish, so detection systems must be retrained constantly against evolving techniques. The error-rate asymmetry also matters: missing 5% of deepfakes is usually unacceptable, but wrongly flagging 5% of legitimate content produces an unacceptable flood of false positives.
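
A quick worked example shows why false positives dominate. Assume, purely for illustration, 1,000,000 items of which 1% are deepfakes, and a detector that catches 95% of fakes but also flags 5% of genuine items:

```latex
\begin{aligned}
\text{fakes} &= 0.01 \times 10^6 = 10{,}000, \qquad \text{genuine} = 990{,}000 \\
\text{fakes caught} &= 0.95 \times 10{,}000 = 9{,}500, \qquad \text{fakes missed} = 500 \\
\text{false alarms} &= 0.05 \times 990{,}000 = 49{,}500 \\
\text{precision} &= \frac{9{,}500}{9{,}500 + 49{,}500} = \frac{9{,}500}{59{,}000} \approx 0.16
\end{aligned}
```

Under these assumptions, roughly five of every six flagged items are genuine, while 500 fakes still slip through.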

Authentication, by contrast, doesn’t try to detect deepfakes at all. It proves that specific content originated from a specific source and hasn’t been modified. An authenticated document is trusted because of its provenance, not because detection algorithms couldn’t find manipulation artifacts. The economic model differs too: detection is a perpetual race to keep up with AI improvements, while authentication is largely an up-front investment in verification infrastructure.

By 2026, successful fraud prevention strategies don’t rely primarily on detection. They combine three layers: (1) authentic content carries cryptographic proof of origin; (2) incoming documents are verified against known authentic sources; (3) AI detection tools catch the remaining unverified content that doesn’t have proper cryptographic credentials.
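
The three layers can be sketched as a single triage function. In this hypothetical design:

- content with a valid signature from a trusted key is accepted;
- content whose credential fails to verify is rejected as tampered;
- only content without usable credentials falls through to a probabilistic detector.

The `Verdict` names, the trusted-key dictionary and the `detector` callable (a stand-in for any deepfake classifier returning a score) are assumptions for the sketch.

```python
from enum import Enum
from typing import Callable, Optional

from cryptography.exceptions import InvalidSignature
from cryptography.hazmat.primitives.asymmetric.ed25519 import (
    Ed25519PrivateKey,
    Ed25519PublicKey,
)


class Verdict(Enum):
    AUTHENTIC = "authentic"                      # valid signature from a trusted source
    TAMPERED = "tampered"                        # credential present but does not verify
    SUSPECTED_SYNTHETIC = "suspected_synthetic"  # unsigned and flagged by the detector
    UNVERIFIED = "unverified"                    # unsigned and not flagged: treat with caution


def triage(
    content: bytes,
    signature: Optional[bytes],
    signer_id: Optional[str],
    trusted_keys: dict[str, Ed25519PublicKey],
    detector: Callable[[bytes], float],
    threshold: float = 0.5,
) -> Verdict:
    # Layers 1 and 2: check the credential against known authentic sources.
    if signature is not None and signer_id in trusted_keys:
        try:
            trusted_keys[signer_id].verify(signature, content)
            return Verdict.AUTHENTIC
        except InvalidSignature:
            return Verdict.TAMPERED
    # Layer 3: no usable credential, so fall back to (probabilistic) AI detection.
    return Verdict.SUSPECTED_SYNTHETIC if detector(content) >= threshold else Verdict.UNVERIFIED


def stub_detector(_: bytes) -> float:
    """Hypothetical stand-in for a real deepfake classifier."""
    return 0.9


newsroom = Ed25519PrivateKey.generate()
trusted = {"newsroom": newsroom.public_key()}
clip = b"placeholder video bytes"

print(triage(clip, newsroom.sign(clip), "newsroom", trusted, stub_detector))         # Verdict.AUTHENTIC
print(triage(clip + b"x", newsroom.sign(clip), "newsroom", trusted, stub_detector))  # Verdict.TAMPERED
print(triage(clip, None, None, trusted, stub_detector))                              # Verdict.SUSPECTED_SYNTHETIC
```

The detector is consulted only for the residue that lacks credentials. As signed content becomes the norm, the detector's false-positive burden shrinks accordingly.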

This hierarchical approach explains the enterprise adoption pattern. Organizations detecting fraud using only AI models achieve 78% accuracy at $0.50 per item. Organizations using cryptographic signatures achieve 99.8% accuracy at $0.15 per item. The choice is economically obvious—verification beats detection.

A cost-accuracy comparison reveals the fundamental tradeoff: manual review remains expensive yet unreliable, while cryptographic signatures and C2PA credentials deliver exceptional accuracy at minimal operational cost. This efficiency advantage explains why enterprises are accelerating adoption of standards-based authentication systems that reduce per-item verification expenses by up to 99% while improving accuracy.

Future Outlook: The Verification-First Internet

The trajectory points toward a verification-first information infrastructure. Content will carry cryptographic proof of origin as standard practice, not exception. Platforms will require verified credentials before amplifying content. Creators will build authentication into workflows automatically, the way checksums were embedded into software distributions decades ago.

This isn’t a hardship. It’s a precondition for trust at scale. The old model—where institutions maintained trust through reputation—worked when information velocity was manageable and institutional gatekeepers controlled distribution. That model is dead. The alternative isn’t returning to institutional authority. It’s democratizing verification through mathematics.

Some implications crystallize from 2026’s trajectory:

Blockchain registration becomes standard for high-stakes content. Not because blockchain is necessary for verification (cryptographic signatures work without it), but because it creates tamper-evident timestamps that can help satisfy legal evidentiary standards. Legal proceedings increasingly expect blockchain-registered proof of publication date and authorship.

Small language models trained on verified content gain a competitive advantage. AI models trained on content with cryptographic proof of provenance are expected to be more accurate and more resistant to misinformation than models trained on unverified corpora. Organizations building proprietary AI systems will increasingly demand verified training data.

Deepfake detection converges with authentication. Rather than trying to detect deepfakes in isolation, future systems will identify content without valid authentication credentials and flag it as suspicious. The absence of cryptographic proof becomes more meaningful than the presence of suspicious artifacts.

Interoperability emerges through standards adoption. C2PA, blockchain verification standards, and Python-based verification APIs will become the infrastructure layer that enables seamless content verification across platforms. Organizations that standardize early gain compatibility advantages.

Regulatory compliance becomes a commodity. Organizations that implement C2PA and cryptographic signatures can address compliance requirements across multiple jurisdictions at once, as jurisdiction-specific compliance frameworks give way to globally compatible technical standards.

The Practical Challenge: Implementation Reality

For technical teams, the transition to cryptographic content verification faces real obstacles beyond the mathematics.

Key management is the first challenge. Cryptographic signatures depend on private keys remaining private. Enterprise systems must store, rotate, audit, and distribute keys across organizations without compromise. This is straightforward for centralized systems but becomes complex in distributed workflows involving freelancers, contractors, and partner organizations. Hardware security modules help but add cost and complexity.
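
Rotation is easier to reason about with explicit key identifiers. Here is a minimal sketch of a verifier-side keyring:

- each signature names the key that made it;
- retired keys remain valid for content signed before their retirement date;
- revoked keys are rejected outright.

The `Keyring` and `KeyRecord` classes and key IDs are hypothetical. In production, `signed_at` should come from a trusted timestamp rather than the signer's own clock, otherwise a stolen retired key could simply backdate new signatures.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional

from cryptography.exceptions import InvalidSignature
from cryptography.hazmat.primitives.asymmetric.ed25519 import (
    Ed25519PrivateKey,
    Ed25519PublicKey,
)


@dataclass
class KeyRecord:
    public_key: Ed25519PublicKey
    not_after: Optional[datetime] = None  # set when the key is retired
    revoked: bool = False                 # set if the key is known to be compromised


@dataclass
class Keyring:
    records: dict[str, KeyRecord] = field(default_factory=dict)

    def add(self, key_id: str, public_key: Ed25519PublicKey) -> None:
        self.records[key_id] = KeyRecord(public_key)

    def retire(self, key_id: str, when: datetime) -> None:
        self.records[key_id].not_after = when

    def verify(self, key_id: str, signed_at: datetime, data: bytes, signature: bytes) -> bool:
        record = self.records.get(key_id)
        if record is None or record.revoked:
            return False
        if record.not_after is not None and signed_at > record.not_after:
            return False  # signed after the key was rotated out
        try:
            record.public_key.verify(signature, data)
            return True
        except InvalidSignature:
            return False


old_key, new_key = Ed25519PrivateKey.generate(), Ed25519PrivateKey.generate()
ring = Keyring()
ring.add("studio-2025", old_key.public_key())
ring.add("studio-2026", new_key.public_key())
ring.retire("studio-2025", datetime(2026, 1, 1, tzinfo=timezone.utc))

archived = old_key.sign(b"2025 archive photo")
print(ring.verify("studio-2025", datetime(2025, 6, 1, tzinfo=timezone.utc), b"2025 archive photo", archived))  # True
late = old_key.sign(b"new photo")
print(ring.verify("studio-2025", datetime(2026, 2, 1, tzinfo=timezone.utc), b"new photo", late))  # False
```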

Integration with existing workflows represents the deeper challenge. Production teams have optimized around current processes. Adding cryptographic signing means modifying ingestion pipelines, storage systems, and distribution infrastructure. The technical lift is manageable—Python libraries make implementation straightforward—but the operational burden of changing established workflows is significant.

Legacy system compatibility creates friction too. Systems built even five years ago often cannot generate or validate cryptographic signatures. Complete infrastructure replacement is economically unrealistic for many organizations, making phased adoption necessary.

Yet the competitive pressure to implement is relentless. Organizations that verify content gain customer trust, reduce fraud losses, and enable new revenue streams like verified content licensing. Organizations that don’t verify content face reputational damage from deepfakes and regulatory penalties. The economic incentive structure pushes implementation forward despite operational friction.


Pooja Upadhyay

Director of People Operations & Client Relations

Conclusion: Mathematics as Trust Infrastructure

The deepfake crisis of the mid-2020s revealed that trust in digital content cannot depend on institutional authority or human judgment. Both scale poorly against synthetic media, both succumb to social engineering, and both fail when incentives become misaligned.

Cryptographic signatures provide an alternative that works at any scale: mathematical proof that doesn’t require gatekeepers or human verification. Python democratizes implementation, making verification accessible to organizations of any size. Industry standards like C2PA provide interoperability. Regulatory frameworks create compliance mandates. Enterprise adoption is shifting from experimental to mainstream.

By 2026, the question is no longer whether content verification will happen. It’s whether organizations will invest in verification infrastructure proactively or face the consequences of operating in an unverifiable information environment.

The mathematics won’t judge which path organizations choose. But the market, regulators, and customers certainly will.

Key Takeaways

- Deepfake incidents have surged 257% year-over-year, with deepfake files growing from 500,000 in 2023 to 8 million by 2025, creating a mainstream fraud vector rather than isolated attacks.

- Cryptographic signatures cut per-item verification costs by more than 99% ($0.15 versus $25 for manual review) while raising accuracy from 72% to 99.8%.

- C2PA standard adoption has jumped from 8% in 2024 to 35% by 2026, driven by integration into Adobe Creative Cloud, Canon cameras, and Microsoft applications.

- EU AI Act penalties reach €15 million or 3% of global turnover for organizations that fail to mark AI-generated content in a machine-readable way. That makes technical provenance methods such as cryptographic signatures a practical requirement rather than an option.

- Python libraries like cryptography and pycryptodome have reached production maturity, enabling enterprises to build verification infrastructure without cryptographic specialists.

- Enterprise fraud detection spending is projected to reach $39.8 billion in 2026, with a growing share going to cryptographic signature verification and C2PA-based systems.

- In some jurisdictions, blockchain-registered cryptographic signatures can satisfy legal evidentiary standards. This lets journalists prove publication priority and copyright ownership with mathematical evidence rather than institutional testimony.
